The Immediate option runs the scheduler as soon as the Redshift migration is triggered.

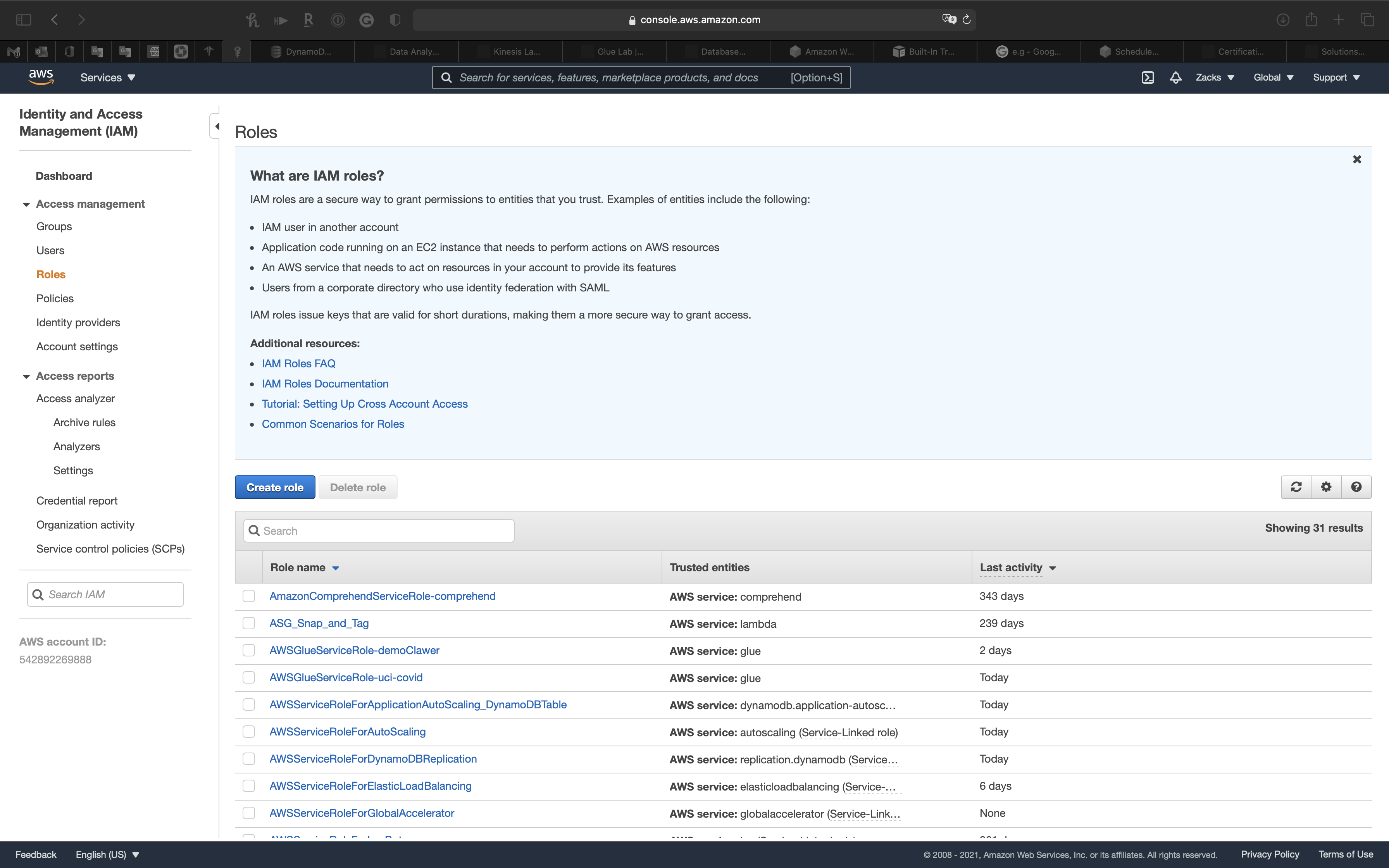

In the AWS Redshift Migration, specify the Connection.

- Connection: Name of the Redshift database connection.
- Available Schemas: Schemas available for the specific connection.
- Selected Schemas: Click the Add icon to select the schemas you want to migrate from Available Schemas.
- Include Data: DDL and DATA migrates the selected schemas and data.

When you migrate data, you have to provide the AWS access key, the AWS Secret Access Key, and an existing S3 bucket URI where the Redshift data will be unloaded and staged. The security credentials require certain privileges to store data in S3. It is recommended to create new, separate access keys for the migration. The same access key is used later on to load the data into the Autonomous Database using secure REST requests.

- AWS Access Key: For more information on access keys, see AWS Identity and Access Management.
- AWS Secret Access: For more information on access keys, see AWS Identity and Access Management.
- S3 Bucket URI: For information on common S3 ServiceException errors, see S3ServiceException Errors. For more information on S3 buckets, see Creating and Configuring an S3 Bucket.
- Output Directory: Enter the path or click Select Directory to select the directory or folder for the migration.
- Maximum Number of Threads: Enter the number of parallel threads to enable when loading data to tables in Autonomous Database.
- Use Scheduler: Select this option to enable the scheduler for migration.

You can schedule jobs for data load migration operations from the AWS S3 bucket to Autonomous Database. You have the option to run the scheduled jobs immediately or at a future date and time. To monitor the data load scheduled jobs, use the Scheduler node in the Connections navigator.
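To make the Maximum Number of Threads setting more concrete, here is a minimal Python sketch of how a thread cap bounds the number of table loads that run at the same time. It is only an illustration of the general idea, not how SQL Developer or Autonomous Database implements the load. The names `load_table` and `run_loads` and the table names are hypothetical, and the actual load is simulated with a short sleep.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def load_table(table_name, tracker):
    """Hypothetical stand-in for loading one table; records how many loads overlap."""
    with tracker["lock"]:
        tracker["active"] += 1
        tracker["peak"] = max(tracker["peak"], tracker["active"])
    time.sleep(0.05)  # simulated work; a real load would copy staged files into the table
    with tracker["lock"]:
        tracker["active"] -= 1
    return table_name


def run_loads(tables, max_threads):
    """Load all tables using at most max_threads concurrent workers.

    Returns the tables in submission order and the peak number of
    loads that were running at the same time.
    """
    tracker = {"lock": threading.Lock(), "active": 0, "peak": 0}
    with ThreadPoolExecutor(max_workers=max_threads) as pool:
        loaded = list(pool.map(lambda name: load_table(name, tracker), tables))
    return loaded, tracker["peak"]


if __name__ == "__main__":
    # Hypothetical table names; the cap of 2 plays the role of the thread setting.
    tables = ["orders", "customers", "inventory", "shipments", "returns"]
    loaded, peak = run_loads(tables, max_threads=2)
    print("loaded:", loaded)
    print("peak concurrent loads:", peak)
```

With five tables and a cap of two, the remaining tables wait until a worker is free, so raising the cap shortens the total run only as long as the target database and network can absorb the extra parallel loads.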

Add Connection to Amazon Redshift Database

In the Connections panel, right-click Connections and select New Connection. Select the Amazon Redshift tab and enter the connection information for Amazon Redshift. If you are planning to migrate multiple schemas, it is recommended to connect to your Amazon Redshift system with the Master username.

For more details on configuring a JDBC connection and obtaining the Amazon Redshift JDBC URL, see AWS: Configure a JDBC Connection. For more details on configuring security options for the connection (in case of "Amazon connection error"), see AWS: Configure Security options for Connection.

If you deployed your Amazon Redshift environment within a Virtual Private Cloud (VPC), you have to ensure that your cluster is accessible from the Internet. See for details of how to enable public Internet access. If your Amazon Redshift client connection to the database appears to hang or times out when running long queries, see for possible solutions to address this issue.

Identify the schemas in the Amazon Redshift database to migrate. All objects, mainly tables, in the schema will be migrated. Migration to Autonomous Database is on a per-schema basis. Schemas cannot be renamed as part of the migration.

I’m pulling data from MySQL EC2 instances to S3 buckets, then creating views in Redshift. I want to create database users who can only query and see certain views created specifically for them in Redshift. I have example code below that I use to create the user, view, and grant access. The issue I have is that I also have to grant access to the underlying schema the view is created from, which means the user can see and query tables in that schema. Also, the user can see other schemas in the database, even ones they can’t query. Is there a way to grant users access only to specific views, and make it so they can’t see other schemas they don’t have access to?

Create view tst_user_schema.inventory_report_customer as (
Select * from user341.
